Pi Wars 2017 – Carry on follow that line…..

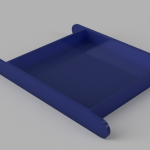

Phil Willis : February 24, 2017 11:39 : PiWars 2017

While there has been lots of cutting and 3D printing to do, Phil had also agreed to code the line follower challenge. With TractorBot I we had experimented with the OpenCV computer vision software but the results were disappointing. However, with Pi 3 power we thought we might be able to get a Pi Camera to perform the line following duties at reasonable frame rates. Initial trials were encouraging and Keith designed a servo controlled camera case for the front of TractorBot.

The idea was that when the line follower was chosen from the menu, the servo would activate and the line would be illuminated by a Blinkt in the nose. After a few attempts and a lot of tuning we were happy with the result. https://youtu.be/Yrbg0VhtKmk

This is a graphic (sorry) illustration of how far the Pi has developed in just a few years.
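The post doesn't include the line follower code itself, so here is only a minimal sketch of the general idea, not the team's actual implementation. It assumes a single row of grayscale pixel values (0 to 255) taken from a camera frame, a dark line on a lighter floor, and motor speeds in the range -1.0 to 1.0. The function names, threshold and gain are all made up for illustration; grabbing the frame with OpenCV or the Pi Camera is left out.

```python
def line_offset(row, threshold=100):
    """Return the line position across the row as a value in [-1.0, 1.0].

    -1.0 is the far left, 0.0 is dead centre, 1.0 is the far right.
    Returns None if no pixel is darker than the (assumed) threshold.
    """
    if len(row) < 2:
        return None
    # Indices of pixels dark enough to count as part of the line.
    dark = [i for i, value in enumerate(row) if value < threshold]
    if not dark:
        return None
    centre = sum(dark) / len(dark)      # centroid of the dark pixels
    half_width = (len(row) - 1) / 2     # index of the middle of the row
    return (centre - half_width) / half_width


def motor_speeds(offset, base=0.5, gain=0.4):
    """Proportional steering: turn towards the line by speeding up one side.

    Returns (left, right) speeds clamped to [-1.0, 1.0], or a stop
    command if the line has been lost.
    """
    if offset is None:
        return 0.0, 0.0
    turn = gain * offset
    left = max(-1.0, min(1.0, base + turn))
    right = max(-1.0, min(1.0, base - turn))
    return left, right


# Example: a 9-pixel row with the line two pixels right of centre.
row = [255, 255, 255, 255, 255, 255, 0, 255, 255]
left, right = motor_speeds(line_offset(row))
```

With the line to the right of centre the left motor runs faster than the right, which steers the robot back over the line. Most of the "lot of tuning" in a scheme like this would go into the threshold and the gain.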

Pi Wars 2017 – Boards….

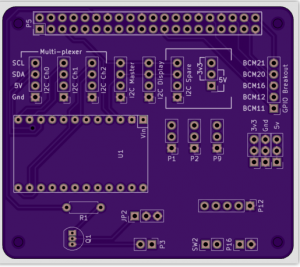

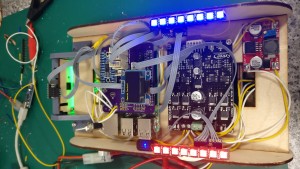

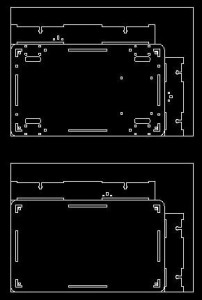

Phil Willis : February 23, 2017 13:23 : PiWars 2017

We left our robot looking like a moving nest of wires, so what to do about it? Keith’s beautiful 3D renders always miss out the wiring that is a necessary evil when putting one of these together. Thankfully, Keith was also able to come up with a solution: our own interface PCB, to get rid of a fair number of wires and sort out the build. KiCad to the rescue. Keith produced some boards to support the Blinkts we were using as well as a main board to fit HAT style on the Pi.

- Output from KiCad

- Boards have arrived, thanks OSH Park

- Close up of the main board

After fitting the various header pins and ribbon cables it really does look the part, much neater.

Pi Wars 2017 – In which Jon goes shopping

Phil Willis : February 17, 2017 19:54 : PiWars 2017

The third member of the team, Jon, had taken the lead on the software design. He started with our original TractorBot 2015 software and restructured it to allow better collaboration via GitHub. Jon has created several useful libraries for the Pi and if you are interested you should check out his GitHub page. https://github.com/leachj

Jon already had some good ideas for how to give the robot senses to allow it to navigate the maze, and so purchased some Time of Flight sensors. We used these magical I2C devices in 2015 to keep our speeding robot from crashing into the walls when it came to the straight line challenge. Don’t just take our word for it…

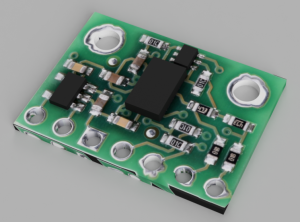

The VL6180X Time of Flight Distance Ranging Sensor is a Time of Flight distance sensor like no other you’ve used! The sensor contains a very tiny laser source, and a matching sensor. The VL6180X can detect the “time of flight”, or how long the laser light has taken to bounce back to the sensor. Since it uses a very narrow light source, it is good for determining the distance of only the surface directly in front of it. Unlike sonars that bounce ultrasonic waves, the ‘cone’ of sensing is very narrow. Unlike IR distance sensors that try to measure the amount of light bounced, the VL6180X is much more precise and doesn’t have linearity problems or ‘double imaging’ where you can’t tell if an object is very far or very close.

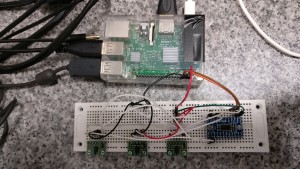

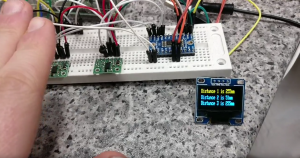

Jon set to work improving his VL6180 sensor library and breadboarded three sensors using an I2C multiplexer unit from Adafruit to complete the test rig. While his credit card was in his hand he also purchased a small OLED display so we could select the current challenge mode. It’s a really cute display and very clear, just wait until you see it in real life. The menu system even has tiny icons!
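The multiplexer is needed because every one of these sensors answers on the same I2C address, so only one can be connected to the bus at a time. The sketch below shows roughly how three sensors can be polled through it. It is not Jon's library: it assumes the multiplexer is a TCA9548A at its default address 0x70 (the post only says "from Adafruit"), where a channel is selected by writing one byte with that channel's bit set. `read_range` is a hypothetical stand-in for whatever the sensor library provides, and `FakeBus` only exists so the example runs without hardware; on a Pi an `smbus`-style bus object with a `write_byte(address, value)` method would take its place.

```python
MUX_ADDRESS = 0x70  # assumed: default address of a TCA9548A multiplexer


def mux_select_byte(channel):
    """Return the control byte that enables a single multiplexer channel."""
    if not 0 <= channel <= 7:
        raise ValueError("channel must be between 0 and 7")
    return 1 << channel


def read_all(bus, read_range, channels=(0, 1, 2)):
    """Switch to each channel in turn and take one reading from its sensor.

    bus        -- object with write_byte(address, value), smbus style
    read_range -- hypothetical callable returning one distance in mm
    """
    readings = {}
    for channel in channels:
        bus.write_byte(MUX_ADDRESS, mux_select_byte(channel))
        readings[channel] = read_range()
    return readings


class FakeBus:
    """Stand-in for a real I2C bus that just records what was written."""

    def __init__(self):
        self.writes = []

    def write_byte(self, address, value):
        self.writes.append((address, value))


# Example with canned readings in place of real sensors.
canned = iter([120, 80, 45])
bus = FakeBus()
distances = read_all(bus, lambda: next(canned))
```

Here `distances` maps each multiplexer channel to the reading taken while that channel was selected, which is the shape of data a maze-solving routine would want for left, front and right walls.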

While Keith’s beautiful renders look fantastic, the reality is that once you start hooking up the wiring, what was a sleek machine begins to look more like a rat’s nest.

What to do about all those wires…

Pi Wars 2017 – I love the smell of burning ply!

Phil Willis : February 16, 2017 19:38 : PiWars 2017

Well, we have a design beautifully modeled in Fusion 360 by Keith, our designer extraordinaire.

Time to make it real……

No, you’re not seeing double: our secret weapon is two robots! Since Phil and Jon would be working on different challenges, we decided to build two robots so the software could be developed in parallel.

We now had our chassis and motors, so it was time to add a Pi and motor controller and let the magic happen.

At least we had something that moved!

Pi Wars 2017 – Motors!

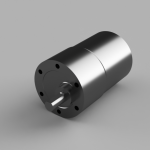

Phil Willis : February 15, 2017 20:29 : PiWars 2017

We were in the running. Our first choice, and one of the most difficult, always seems to be motors. With last year’s bot being too big we needed smaller motors and were initially drawn to these tiny metal geared ones from Pimoroni.

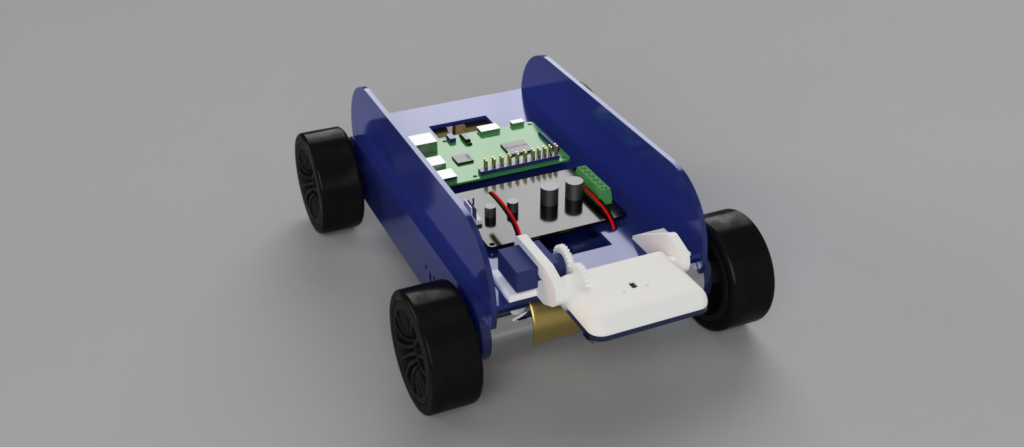

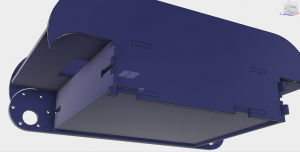

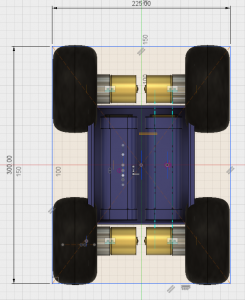

Keith’s ability with Fusion 360 allowed rapid prototyping of a new robot chassis made from plywood and some 3D printed brackets, and testing of a new bot began.

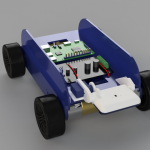

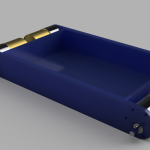

Unfortunately, the small motors were not up to the job and we needed a larger power plant. The motors eventually chosen were much beefier but that dictated the size of the final bot. With the new motors, Keith was able to model the components in Fusion.

Motors and batteries.

The size of the motors and our existing battery packs pretty much determined the size of the new robot chassis.

- Fusion 360

- Fusion 360

- Fusion 360

So now we have a chassis and Phil can get on and laser cut the next prototype…